Almost Half of AI-Written Code Fails in Production — Here's Why That Matters

A new report found that 44% of AI-generated code breaks when it hits real users. Not in testing. In production. Here's what's actually going on and what engineers should do about it.

A report dropped this week that most developers scrolled past.

Lightrun surveyed 200 senior engineering leaders across the US, UK, and EU. The finding that stood out: 44% of AI-generated code changes still fail when they reach real users in production.

Not in your local environment. Not in staging. In production, with real users.

Let's talk about why that's happening — and what it means for you.

The Problem Nobody Is Talking About

Everyone is focused on how fast AI writes code.

Nobody is asking: how much of it actually works when it matters?

The report found three things going wrong:

1. Teams need 2-3 extra deployment cycles just to confirm an AI fix worked. You ship. It breaks. You fix. You ship again. And again. That's not faster — that's just moving the problem downstream.

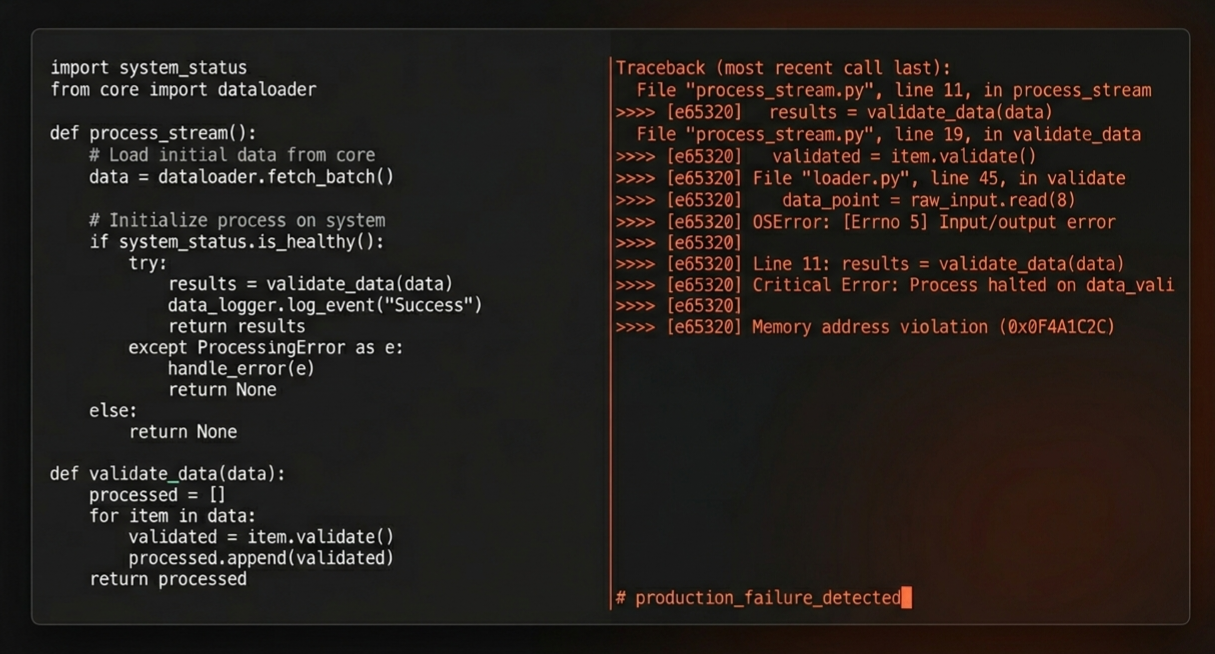

2. 60% of engineering leaders say they can't see what's happening inside their systems at runtime. AI agents reason their way to a solution using probability. But if the agent can't see what's actually running in production — real memory usage, real request flows, real variable states — it's guessing. Confident guessing, but still guessing.

3. 54% of serious production incidents are still being fixed using tribal knowledge — not AI tools. When things go badly wrong, engineers reach for the person who's been around for 5 years, not the AI. That tells you something.

Why This Happens

The core issue is simple.

AI agents are trained on code. They are not trained on your production environment. They don't know your traffic patterns, your infrastructure quirks, your edge cases, or the weird workaround your team added in 2022 that nobody documented.

When an agent fixes a bug, it fixes it based on what the code looks like. Not based on what actually happens when 10,000 users hit it at the same time.

That gap — between how code looks and how it behaves — is where failures live.
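Here's a minimal sketch of that gap in Python. Everything in it is hypothetical (the `Inventory` class, the `reserve_*` methods, the `pause` hook that stands in for real work between the check and the write), and it is not from the report. It just shows code that reads correctly and passes a one-user test, then oversells when two buyers arrive together.

```python
import threading
import time


class Inventory:
    """Toy stock counter; every name here is hypothetical."""

    def __init__(self, stock):
        self.stock = stock
        self._lock = threading.Lock()

    def reserve_naive(self, pause=lambda: None):
        # Reads fine: check the stock, then take one unit.
        if self.stock > 0:
            pause()  # stands in for real work between check and write
            self.stock -= 1
            return True
        return False

    def reserve_locked(self, pause=lambda: None):
        # Same logic, but the check and the write happen as one step.
        with self._lock:
            if self.stock > 0:
                pause()
                self.stock -= 1
                return True
            return False


def count_successes(reserve, buyers):
    """Call `reserve` from `buyers` threads at once; count how many got a unit."""
    results = []
    threads = [
        threading.Thread(target=lambda: results.append(reserve()))
        for _ in range(buyers)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(results)


def oversell_demo():
    # One unit in stock, two buyers arriving together.
    naive = Inventory(stock=1)
    barrier = threading.Barrier(2)  # holds both buyers just past the check
    naive_sold = count_successes(lambda: naive.reserve_naive(barrier.wait), 2)

    locked = Inventory(stock=1)
    locked_sold = count_successes(
        lambda: locked.reserve_locked(lambda: time.sleep(0.01)), 2
    )
    return naive_sold, locked_sold
```

Called one buyer at a time, `reserve_naive` behaves perfectly. `oversell_demo()` returns `(2, 1)`: the naive version sells the single unit twice, the locked version sells it once. The barrier is only there to make the bad interleaving happen every time; in a real system it shows up at random, under load.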

What's Actually Changing For Engineers

Here's the shift happening right now on real teams:

Before AI tools: Write code → test locally → review → ship → monitor

With AI tools today: Generate code → review AI output → test locally → verify in staging → ship → monitor → debug when it breaks → figure out if it was the AI's code or your review that missed it

The loop got longer, not shorter. At least for now.

The Stanford 2026 AI Index points the same way: it reports that AI is boosting software engineering productivity by 26% on average. But those gains are not evenly distributed. They show up in writing code. They are barely showing up yet in running code reliably.

What Engineers Should Actually Do With This Information

This is not an argument to stop using AI tools. It's an argument to use them more carefully.

Stop trusting AI output at the unit test level only. If AI writes the fix and AI writes the tests for its own fix, you have a closed loop that doesn't catch production behavior. Test it like you'd test a contractor's work — with skepticism.
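One way to break that closed loop, sketched below, is to check the code against rules you wrote yourself instead of examples the tool picked. This is a rough illustration, not a recommended framework: `chunk_candidate` is a made-up stand-in for an AI-written helper, `chunk_fixed` is a corrected version for contrast, and `find_counterexample` throws random inputs at either one using only the standard library.

```python
import random


def chunk_candidate(items, size):
    # Hypothetical AI-written helper: silently drops a trailing partial chunk.
    return [items[i:i + size] for i in range(0, len(items) - size + 1, size)]


def chunk_fixed(items, size):
    # Corrected version: the last chunk may be shorter than `size`.
    return [items[i:i + size] for i in range(0, len(items), size)]


def find_counterexample(chunk_fn, trials=500, seed=0):
    """Try random inputs against two human-written rules.

    Rule 1: gluing the chunks back together gives the original list.
    Rule 2: every chunk has between 1 and `size` items.
    Returns the first (items, size) that breaks a rule, or None.
    """
    rng = random.Random(seed)
    for _ in range(trials):
        items = [rng.randint(0, 9) for _ in range(rng.randint(0, 12))]
        size = rng.randint(1, 5)
        chunks = chunk_fn(items, size)
        flattened = [x for chunk in chunks for x in chunk]
        sizes_ok = all(1 <= len(chunk) <= size for chunk in chunks)
        if flattened != items or not sizes_ok:
            return items, size
    return None
```

The happy-path test a tool would likely write for itself, `chunk_candidate([1, 2, 3, 4], 2) == [[1, 2], [3, 4]]`, passes. `find_counterexample(chunk_candidate)` still comes back with an input where data goes missing, and `find_counterexample(chunk_fixed)` returns `None`. The point is who wrote the rules, not which library runs them.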

Know your system better than the AI does. The 54% of incidents still resolved through tribal knowledge show that context matters. Your observability setup, your service dependencies, your historical failure modes: that knowledge is yours and it's valuable. Don't let it atrophy.

Make AI earn its place in production instead of assuming it belongs there. Use it in your local flow. Use it for scaffolding and drafts and explanation. Be more careful before you let it touch production-critical paths without serious human review.
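If you want that rule enforced instead of remembered, a small pre-merge check can do it. The sketch below is only an illustration under assumptions: the path patterns are invented, and how a change gets marked as AI-assisted (a PR label, a commit trailer, something else) is up to your team. It uses `fnmatchcase` from Python's standard library, where `*` also matches across `/`.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns; swap in whatever counts as critical in your repo.
CRITICAL_PATTERNS = ("payments/*", "auth/*", "migrations/*")


def files_needing_human_review(changed_files, ai_assisted, patterns=CRITICAL_PATTERNS):
    """Return the changed files on a critical path in an AI-assisted change.

    An empty list means the change can follow the normal review flow.
    """
    if not ai_assisted:
        return []
    return sorted(
        path
        for path in changed_files
        if any(fnmatchcase(path, pattern) for pattern in patterns)
    )
```

For example, `files_needing_human_review(["payments/api/charge.py", "docs/readme.md"], ai_assisted=True)` returns `["payments/api/charge.py"]`, which a CI step could turn into a required sign-off from someone who knows that service.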

The Bigger Picture

Here's what these numbers actually say:

AI is genuinely useful. It is also genuinely unreliable in production. Both things are true at the same time.

The engineers who figure out how to use AI well for the fast stuff while staying sharp on the hard stuff (observability, system design, production debugging) are going to be the most valuable people on any engineering team over the next two years.

The engineers who assume AI handles it all and let those skills slide are going to have a very bad time when production goes down at 2 AM and the agent can't help.

Bottom Line

A 44% failure rate in production is not a reason to panic. It is a reason to pay attention.

AI writes code faster than you. It does not understand your production environment better than you. That gap is your value — protect it.

Written by Abdul Momin · Full Stack Software Engineer